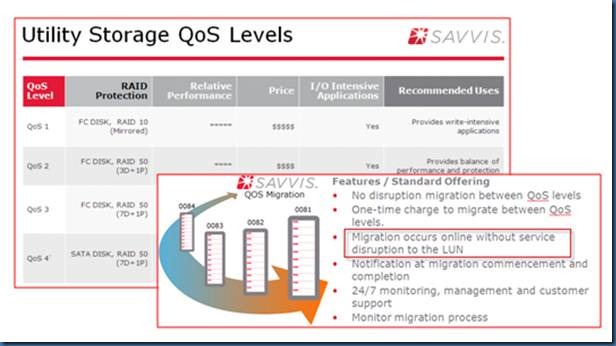

Here is what SAVVIS offers in terms of storage.

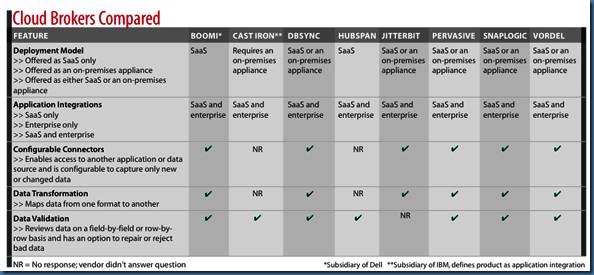

6 Reasons SaaS May Mean A Return To Silos

Don't want to revisit the bad old days? Here's how to keep application integration on track

http://www.informationweek.com/news/cloud-computing/software/231002351

The #1 Cloud Integration Company™: Cast Iron Systems, an IBM Company, providing the simplest and most widely used solution for integrating SaaS and cloud applications with the enterprise in days.

The #1 Integration Cloud: Dell Boomi

Boomi AtomSphere® allows you to connect any combination of Cloud, SaaS, or On-Premise applications with no appliances, no software, and no coding.

OpenStack turns 1. What’s next?

By Derrick Harris

OpenStack, the open-source, cloud-computing software project founded by Rackspace and NASA, celebrates its first birthday tomorrow. It has been a busy year for the project, which appears to have grown much faster than even its founders expected it would. A year in, OpenStack is still picking up steam and looks not only like an open source alternative to Amazon Web Services and VMware vCloud in the public Infrastructure as a Service space, but also a democratizing force in the private-cloud software space.

Let’s take a look at what happened in the past year — at least what we covered — and what to expect in the year to come.

OpenStack year one

- July 19: OpenStack launches.

October

- Oct. 21: OpenStack announces “Austin” release; promises production readiness by Jan. 2011.

- Oct. 22: Microsoft partners with Cloud.com to bring Hyper-V support to OpenStack.

- Oct. 25: Canonical's CEO announces that the Ubuntu Enterprise Cloud operating system will support OpenStack.

January

- Jan. 18: Internap announces first (non-Rackspace) OpenStack-based public cloud-storage service.

February

- Feb. 3: OpenStack releases “Bexar” code and announces new corporate contributors, including Cisco.

- Feb. 10: Rackspace buys Anso Labs, a software contractor that wrote Nova, the foundation of OpenStack Compute, for NASA’s Nebula cloud project.

March

- March 3: OpenStack alters Project Oversight Committee process in response to complaints over Rackspace dominance.

- March 8: Rackspace launches Cloud Builders, an OpenStack service/consultancy offering leveraging the expertise it acquired with Anso Labs.

April

- April 7: Dell announces a forthcoming public cloud service based on OpenStack software.

- April 15: OpenStack releases “Cactus” code, which incorporates critical new features for enterprises and service providers.

- April 26: CloudBees extends its formerly hosted-only Java Platform as a Service to run on OpenStack-based clouds.

- April 29: At the OpenStack Development Summit, AT&T details plans to build a private cloud based on OpenStack. Cisco’s involvement also takes shape at the Summit, as Lew Tucker details a Network-as-a-Service proposal.

May

- May 3: Rackspace announces it will close the Slicehost cloud service to focus all IaaS development efforts on OpenStack.

- May 4: Internap announces the first (non-Rackspace) OpenStack-based public IaaS offering.

- May 25: Citrix unveils plans for Project Olympus, the foundation of a forthcoming commercialized OpenStack distribution.

July

- July 11: Piston Cloud Computing, led by the former chief architect of NASA’s Nebula, announces funding and forthcoming OpenStack-based software and services.

- July 12: Citrix buys private-cloud startup (and OpenStack contributor) Cloud.com, promises tight integration between Cloud.com’s CloudStack software and Project Olympus.

Although the new code, contributors and ecosystem players came fast and furious, OpenStack wasn’t without some controversy regarding the open-source practices it employs. Some contributors were concerned with the amount of control that Rackspace maintains over the project, which led to the changes in the voting and board-selection process. Still, momentum was overwhelmingly positive, with even the federal government supposedly looking seriously at OpenStack as a means of achieving one of its primary goals, cloud interoperability.

What’s next

According to OpenStack project leader Jonathan Bryce, the next year for OpenStack likely will be defined by the creation of a large ecosystem. This means more software vendors selling OpenStack-based products (he said Piston is only the first-announced startup to get funding) as well as implementations. Aside from public clouds built on OpenStack, Bryce also thinks there will be dozens of publicly announced private clouds built atop the OpenStack code. Ultimately, it’s a self-sustaining cycle: More users means more software and services, which mean more users.

There’s going to be competition, he said, but that’s a good thing for the market because everyone will be pushing to make OpenStack itself better. The more appealing the OpenStack source code looks, the more potential business for Rackspace, Citrix, Piston, Dell, Internap and whoever else emerges as a commercial OpenStack entity.

If this comes to fruition, it’ll be a fun year to cover cloud computing and watch whether OpenStack can actually succeed in its lofty goal of upending what has been, up until now, a very proprietary marketplace.

Image courtesy of Flickr user chimothy27.

source: Gigaom

Cisco Enhances Cloud Computing

Cisco (NASDAQ: CSCO) has announced new technology designed to increase the efficiency and security of cloud-based networks, including private, public and hybrid clouds.

New additions to the Cisco product portfolio address three key cloud computing requirements (data center virtualization, network performance and security) and span the entire cloud infrastructure, from the end user and branch office, through the network, to the data center, Cisco said.

UCS Manager, which manages all system configuration and operations for both UCS blade servers and rack-mount servers, provides a robust and open Application Programming Interface (API) for integration with other management software. Cisco is now introducing VMware vCenter for the Cisco Unified Computing System and UCS Express on Cisco Integrated Services Routers (ISRs), a unified virtualization management platform which enables IT organizations to organize, rapidly provision and configure the entire virtualized IT environment across branch offices and the data center. This will help customers reduce operating costs, improve operational automation, and deliver higher levels of security.

"The Cisco Unified Computing System unites compute, network, storage access, and virtualization resources in a single energy-efficient system. In less than two years, more than 5,400 customers spanning all industries and workloads have deployed the Cisco Unified Computing System, making it one of the world's favorite foundations for building private and public clouds."

Mainframes can find new life hosting private clouds

With a bit of touching up and reconfiguring, a mainframe can be an easy and cost-effective way to host a private cloud

By Tam Harbert | Computerworld

Mention cloud computing to a mainframe professional, and he'll likely roll his eyes. Cloud is just a much-hyped new name for what mainframes have done for years, he'll say.

"A mainframe is a cloud," contends Jon Toigo, CEO of Toigo Partners International, a data management consultancy in Dunedin, Fla.

If you, like Toigo, define a cloud as a resource that can be dynamically provisioned and made available within a company with security and good management controls, "then all of that exists already in a mainframe," he says.

Of course, Toigo's isn't the only definition of what constitutes a cloud. Most experts say that a key attribute of the cloud is that the dynamic provisioning is self-service -- that is, at the user's demand.

But the controlled environment of the mainframe, which is the basis for much of its security, traditionally requires an administrator to provision computing power for specific tasks. That's why the mainframe has a reputation as old technology that operates under an outdated IT paradigm of command and control.

It's also one of the reasons why most cloud computing today runs on x86-based distributed architectures, not mainframes. Other reasons: Mainframe hardware is expensive, licensing and software costs tend to be high, and there is a shortage of mainframe skills.

Nevertheless, mainframe vendors contend that many companies want to use their big iron for cloud computing. In a CA Technologies-sponsored survey of 200 U.S. mainframe executives last fall, 73 percent of the respondents said that their mainframes were a part of their future cloud plans.

And IBM has been promoting mainframes as cloud platforms for several years. The company's introduction last year of the zEnterprise, which gives organizations the option of combining mainframe and distributed computing platforms under an umbrella of common management, is a key part of IBM's strategy to make mainframes a part of the cloud, say analysts.

The company set the stage 10 years ago when it gave all of its mainframes, starting with zSeries S/390, the ability to run Linux. While mainframes had been virtualizing since the introduction of the VM operating system 30 years earlier, once IBM added Linux, you could move workloads from x86 servers onto virtual Linux servers on a mainframe.

Over the past several years, some organizations have done just that, consolidating and virtualizing x86 servers using Linux on the mainframe. Once you start doing that, you have the basis for a private cloud.

"You have this incredibly scalable server that's very strong in transaction management," says Judith Hurwitz, president and CEO of Hurwitz & Associates, an IT consultancy in Needham, Mass. "Here's this platform that has scalability and partitioning built in at its core."

More here: http://www.infoworld.com/d/data-center/new-job-mainframes-hosting-private-clouds-329

Cloud architecture: Questions to ask for reliability

How do you know your cloud provider has the right web-services architecture? Gregory Machler offers these questions to ask.

Gregory Machler (CSO (US))

I've been an architect on some complex applications, and I have a significant concern about assessing architectural risk for public/private cloud applications. Traditional risk assessments focus on external/internal access to confidential information such as social security numbers, credit card numbers and, for banks, ATM PINs. Access controls and network protection are high priorities because they reduce that risk.

I'm interested in something a little different; I'll call it architectural reliability. The goal is to avoid single points of failure for critical applications so that catastrophic errors, which lead to huge financial losses and a diminished corporate brand, don't occur. So, where would I start to shore up the architecture? Here are some storage and networking diagnostic questions I would ask for the top-10 applications within a corporation. Note that some questions are pertinent to all applications and some only within a given domain. I'm going to focus on just the storage and networking product domains that support the top-10 applications.

Storage Architecture -- All Applications

1. Is only one SAN vendor used for storage of all of the applications?

2. How is data de-duplication addressed?

3. Is only one SAN switch vendor used for all of the applications?

4. Is only one data replication vendor used?

5. Is only one encryption vendor used to encrypt data for all of the applications?

6. Which encryption algorithm is used for a given encryption tool?

7. Is only one PKI vendor used to manage certificates?

8. Where are the certificates related to data at rest encryption stored?

Storage Architecture -- Each Application

1. What storage subsystem does the application run on?

2. Which other applications run on the same subsystem?

3. Is the data on the storage subsystem replicated elsewhere or is this the only copy?

4. How is the need for more data storage addressed for a given application?

5. What SAN switch is used for traffic to/from the storage subsystem?

6. What network components are used to replicate SAN data from one data center to another remote data center?

7. What is the application that performs data replication?

8. What is the software version and release for the data replication application?

9. Which encryption vendor is used to encrypt confidential data on a given storage subsystem?

10. Does the storage for the encryption tool also run on a SAN shared with other applications?

11. Can corruption of the encryption data affect multiple applications or just this application?

12. What PKI vendor is used?

13. What version and release of PKI software is deployed?

Network Architecture -- All Applications

1. Is there only one switch/router vendor?

2. Is there only one firewall vendor?

3. Is there only one Intrusion Protection System/Intrusion Detection System (IPS/IDS) vendor?

4. Is there only one load balancer vendor?

5. Is there just one telecommunications vendor to the internet and/or WAN (Wide Area Network)?

Network Architecture -- Each Application

1. Which switch/routers are used within the data center?

2. Which switch/router models are used?

3. Are the switch/routers in an architecturally redundant design?

4. What version of embedded software and model of hardware is used in switch/router deployment?

5. Which firewall vendor is used?

6. What models of firewalls are deployed in the data center?

7. Is a limited number of firewall permutations (embedded OS version, hardware model, features) deployed?

8. What intrusion protection/detection products are deployed?

9. Which intrusion protection/detection vendors are used?

10. What permutations of IPS/IDS are deployed in the data center?

11. What version of IPS/IDS software is deployed?

12. Which vendor's load balancers are used?

13. Which load balancer model is used?

14. What is the version of the load balancer's embedded software and model of hardware?

15. Are they used to steer traffic between different global data centers?

16. Are the load balancers redundant? Could one instantly take the place of another?

17. What telecommunications vendors are used for internet access?

18. What WAN telecommunications vendor is used for traffic between data centers?

19. What WAN telecommunications vendor is used for traffic between offices and the data center?

20. Is the telecommunications equipment redundant?

21. Are the underground telecommunications fiber routes physically separate?

These questions cover a large chunk of the storage and networking diagnostic questions. I'm sure I've missed some, but this should provide a flavor of what the critical web applications are using within the infrastructure cloud layer. These questions give insight into whether or not failure in a given product would affect multiple applications. They help companies design and tune the architecture properly so that redundancy can be created in all products where possible; then the failure of a given product does not cascade to multiple critical applications. It is very likely much cheaper to over-engineer, anticipating and reacting well to failure, than to suffer very expensive cloud services downtime.

The questions about whether only one vendor is used for a given product type reveal a potential enterprise weakness. Full reliance on one vendor can lead to significant failure if a specific hardware/software release of a product has a flaw that surfaces only under stressful conditions. Then all cloud applications that use that product would be impacted. The other questions address what I'll call use congestion: multiple applications sharing the same component (storage subsystem, server or firewall), so that a failure of that product affects all those applications simultaneously.
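Both failure patterns, single-vendor dependence and use congestion, can be checked mechanically once the answers to the questions above are collected into an inventory. The Python sketch below assumes a hypothetical inventory format, a list of (application, product type, vendor, component ID) records; the record layout, function names and sample data are illustrative, not taken from the article.

```python
from collections import defaultdict
from typing import NamedTuple

class Deployment(NamedTuple):
    """One questionnaire answer: which component serves which application.
    This record layout is a hypothetical inventory format."""
    app: str
    product_type: str   # e.g. "SAN", "firewall", "load balancer"
    vendor: str
    component: str      # a specific device or subsystem ID

def single_vendor_types(inventory):
    """Product types for which every deployment uses the same vendor."""
    vendors = defaultdict(set)
    for d in inventory:
        vendors[d.product_type].add(d.vendor)
    return sorted(t for t, v in vendors.items() if len(v) == 1)

def shared_components(inventory, min_apps=2):
    """Use congestion: components that several applications depend on,
    so one failure would hit all of them at once."""
    apps = defaultdict(set)
    for d in inventory:
        apps[d.component].add(d.app)
    return {c: sorted(a) for c, a in apps.items() if len(a) >= min_apps}

inventory = [
    Deployment("billing", "SAN", "VendorA", "san-01"),
    Deployment("trading", "SAN", "VendorA", "san-01"),
    Deployment("billing", "firewall", "VendorB", "fw-01"),
    Deployment("trading", "firewall", "VendorC", "fw-02"),
]
print(single_vendor_types(inventory))  # ['SAN']
print(shared_components(inventory))    # {'san-01': ['billing', 'trading']}
```

Here the SAN is both a single-vendor product type and a shared component, so it would be the first candidate for added redundancy.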

In summary, this article focuses on architectural reliability. It poses a set of questions focused on products within the storage domain, encryption of data at rest, and the networking domain. Since products cost much less than application downtime, over-engineering is encouraged where possible. The need to deploy more product vendors must be balanced against the need to limit product and feature permutations so that realistic disaster recovery scenarios can be tested. Please see a previous article that I wrote on this. I'll visit other cloud layer diagnostic questions in the next article.
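The balance between vendor diversity and testability can be made concrete by counting the distinct deployed permutations (vendor, hardware model, embedded software version) per product type, the quantity the firewall and load-balancer questions ask about. This Python sketch uses a hypothetical device list and a hypothetical per-type budget for how many permutations disaster recovery testing can realistically cover.

```python
from collections import defaultdict

def permutations_by_type(devices):
    """Map each product type to its set of distinct
    (vendor, model, software version) combinations."""
    perms = defaultdict(set)
    for product_type, vendor, model, version in devices:
        perms[product_type].add((vendor, model, version))
    return perms

def over_budget(devices, budget):
    """Product types with more distinct permutations than DR tests can cover.
    `budget` (max permutations per type) is a hypothetical policy value."""
    return {t: len(p) for t, p in permutations_by_type(devices).items()
            if len(p) > budget}

devices = [
    ("firewall", "VendorB", "FW-500", "8.2"),
    ("firewall", "VendorB", "FW-500", "8.3"),
    ("firewall", "VendorC", "X-20", "4.1"),
    ("load balancer", "VendorD", "LB-1", "11.0"),
    ("load balancer", "VendorD", "LB-1", "11.0"),
]
print(over_budget(devices, budget=2))  # {'firewall': 3}
```

Adding a second firewall vendor improves resilience but also raises the permutation count, which is exactly the trade-off the paragraph above describes.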

source: computerworld.com

Azure: it's Windows but not as we know it

Moving an application to the cloud

By Tim Anderson

If Microsoft Azure is just Windows in the cloud, is it easy to move a Windows application from your servers to Azure?

The answer is a definite “maybe”. An Azure instance is just a Windows virtual server, and you can even use a remote desktop to log in and have a look. Your ASP.NET code should run just as well on Azure as it does locally.

But there are caveats. The first is that all Azure instances are stateless. State must be stored in one of the Azure storage services.

Azure tables are a non-relational service that stores entities and properties. Azure blobs are for arbitrary binary data that can be served by a content distribution network. SQL Azure is a version of Microsoft’s SQL Server relational database.

Reassuringly expensive

While SQL Azure may seem the obvious choice, it is more expensive. Table storage currently costs $0.15 per GB per month, plus $0.01 per 10,000 transactions.

SQL Azure costs from $9.99 per month for a 1GB database, on a sliding scale up to $499.95 for 50GB. It generally pays to use table storage if you can, but since table storage is unique to Azure, that means more porting effort for your application.
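The trade-off is simple arithmetic. This Python sketch plugs in the mid-2011 prices quoted above (table storage at $0.15 per GB per month plus $0.01 per 10,000 transactions; SQL Azure at $9.99 per month for a 1GB database). The workload figures are hypothetical, and Azure's prices have since changed.

```python
TABLE_PER_GB_MONTH = 0.15          # USD, as quoted in the article (2011)
TABLE_PER_10K_TRANSACTIONS = 0.01  # USD per 10,000 storage transactions
SQL_AZURE_1GB_MONTH = 9.99         # smallest SQL Azure tier quoted

def table_storage_monthly_cost(gb: float, transactions: int) -> float:
    """Monthly cost of Azure table storage under the quoted 2011 prices."""
    storage = gb * TABLE_PER_GB_MONTH
    activity = (transactions / 10_000) * TABLE_PER_10K_TRANSACTIONS
    return storage + activity

# Hypothetical workload: 1GB of data and one million transactions a month.
cost = table_storage_monthly_cost(1, 1_000_000)
print(f"table storage: ${cost:.2f}, SQL Azure 1GB: ${SQL_AZURE_1GB_MONTH:.2f}")
# table storage: $1.15, SQL Azure 1GB: $9.99
```

At 1GB, table storage stays cheaper until roughly 9.8 million transactions a month; the porting effort is the cost the prices do not show.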

What about applications that cannot run on a stateless instance? There is a solution, but it might not be what you expect.

The virtual machine (VM) role, currently in beta, lets you configure a server just as you like and run it on Azure. Surely that means you store state there if you want?

In fact it does not. Conceptually, when you deploy an instance to Azure you create a golden image. Azure keeps this safe and makes a copy which it spins up to run. If something goes wrong, Azure reverts the running instance to the golden image.

This applies to the VM role just as it does to the other instances, the difference being that the VM role runs exactly the virtual hard drive (VHD) that you uploaded, whereas the operating system for the other instance types is patched and maintained by Azure.

Therefore, the VM role is still stateless, and to update it you have to deploy a new VHD, though you can use differencing for a smaller upload.
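The golden-image behaviour can be pictured with a toy model. In this Python sketch, a conceptual illustration with hypothetical class and method names rather than any Azure API, writes to the running copy's local disk vanish whenever the platform reverts it, and the only way to change the baseline is to deploy a new image.

```python
import copy

class RoleInstance:
    """Toy model of an Azure role instance: Azure keeps the uploaded golden
    image and runs a disposable copy of it. All names are hypothetical."""

    def __init__(self, golden_image: dict):
        self._golden = copy.deepcopy(golden_image)  # the copy Azure keeps safe
        self.disk = copy.deepcopy(golden_image)     # the copy that actually runs

    def write_local(self, path: str, data: str) -> None:
        self.disk[path] = data  # succeeds, but only until the next revert

    def revert(self) -> None:
        """What happens when something goes wrong: back to the golden image."""
        self.disk = copy.deepcopy(self._golden)

    def deploy(self, new_image: dict) -> None:
        """The only way to change the baseline: deploy a new image (VHD)."""
        self._golden = copy.deepcopy(new_image)
        self.revert()

vm = RoleInstance({"C:/app/config.xml": "v1"})
vm.write_local("C:/data/orders.db", "today's orders")
vm.revert()
print("C:/data/orders.db" in vm.disk)  # False: the local state is gone
```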

If your application does expect access to persistent local storage, the solution is Azure Drive. This is a VHD that is stored as an Azure blob but mounted as an NTFS drive with a drive letter.

You pay only for the storage used, rather than for the size of the virtual drive, and you can use caching to minimise storage transactions and improve performance.

No fixed abode

The downside of Azure Drive is that it can be mounted by only one instance, though you can have that instance share it as a network drive accessible by other instances in your service.

Another issue with Azure migrations is that the IP address of an instance cannot be fixed. While it often stays the same for the life of an instance, this is not guaranteed, and if you update the instance the IP address usually changes.
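Because the address can change, clients are better off locating an instance by its DNS name each time they connect than storing an IP address in configuration. This is a minimal Python sketch using the standard library's `socket.getaddrinfo` and `socket.create_connection`; the function names are illustrative and the host name in the usage line is a hypothetical placeholder.

```python
import socket

def current_addresses(host: str, port: int) -> list[str]:
    """Resolve host afresh and return its current addresses.
    Call this at connect time instead of caching an IP in configuration."""
    infos = socket.getaddrinfo(host, port, type=socket.SOCK_STREAM)
    # Each entry is (family, type, proto, canonname, sockaddr); the address
    # is the first element of sockaddr. Deduplicate while keeping order.
    return list(dict.fromkeys(info[4][0] for info in infos))

def connect(host: str, port: int, timeout: float = 5.0) -> socket.socket:
    """create_connection performs a fresh name lookup on every call."""
    return socket.create_connection((host, port), timeout=timeout)

# Usage (hypothetical service name):
# sock = connect("myservice.cloudapp.net", 443)
```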

User management is another area that often needs attention. If it is self-contained and lives in SQL Server, it is not a problem, but if the application needs to support your own Active Directory, you will need to set up Active Directory Federation Services (ADFS) and use the .NET library called Windows Identity Foundation to manage logins and retrieve user information. Setting up ADFS can be tricky, but it solves a big problem.

Azure applications are formed from a limited number of role types: web roles, worker roles and VM roles. This is not as restrictive as it first appears.

Conceptually, the three roles are intended for web applications, background processing and custom operating system builds respectively. In reality you can run whatever you wish in those roles, such as installing Apache Tomcat and running a Java-based web solution in a worker role.

For example, Visual Studio 2010 offers an ASP.NET MVC 2 role, but not the more recent ASP.NET MVC 3. It turns out you can deploy ASP.NET MVC 3 on Azure, provided the necessary libraries are fully included in your application. Even PHP and Java applications will run on Azure.

Caught in the middle

Middleware is more problematic. Azure has its own middleware, called AppFabric, which offers a service bus, an access control service and a caching service. At its May TechEd conference, Microsoft announced enhanced Service Bus Queues and publish/subscribe messaging as additional AppFabric services.

As Azure matures, there will be ways to achieve an increasing proportion of middleware tasks, but migration is a substantial effort.

Hines: Designing for Azure up-front

Nick Hines is chief technical officer of innovation at Thoughtworks, a global software developer and consultancy which is experimenting with Azure.

One migration Hines is aware of is an Australian company that runs an online accounting solution for small businesses. Hines says the migration to Azure was not that easy. The company found incompatibilities between SQL Azure and its on-premise SQL Server.

“While Microsoft claims you can just pick up an application and move it onto Azure, the truth is it’s not that simple,” Hines says.

“To really get the benefit, in terms of the scale-out and so on, designing it for Azure up front is probably a much better idea.

“But the same could be said for deploying an application on Amazon Web Services, to be fair to Microsoft.”

Fun and games in userland

A brief history of virtualisation

By Liam Proven

Posted in IT Management, 18th July 2011 01:00 GMT

Operating-system level virtualisation

As we explained in part 2 of this series, A brief history of virtualisation, running one OS on top of a totally different one was a sound move in the 1960s. On the hardware of the time, full multi-user time-sharing was a big challenge, which virtualisation neatly sidestepped by splitting a tough problem into two smaller, easier ones.

Within a decade, though, a new generation of hardware made it easy enough that a skunkworks project at AT&T was able to create a relatively small, simple OS that was nonetheless a full multi-user, time-sharing one: Unix.

After its early years as a research project, Unix spent a few decades as a proprietary product, with dozens of competing companies offering their own versions, meaning that it splintered into many incompatible varieties. Each company implemented its own enhancements, and then its rivals would copy them to create their own versions.

Fairly late in this process, a new form of virtualisation emerged as one of these features. It was first implemented as part of FreeBSD 4 in 2000; a version came to Linux in 2001, Sun Solaris in 2005 and IBM AIX in 2007. Each Unix calls it by a different name and has slightly different functionality, but the overall concept is the same.

It springs from a far simpler piece of Unix functionality – the humble chroot command, which dates back all the way to Version 7 Unix in 1979. For those Windows-only types out there, a tiny bit of Unix background is needed at this point.

One big directory tree

In all flavours of Unix, there is just one, system-spanning directory tree, starting at the root directory. On CP/M and DOS and Windows, the base level of storage is an assortment of drive letters, on each of which is a directory tree.

Unix does things the other way round: there’s one big directory tree, starting at the root directory – called just “/” – and disk partitions and volumes appear as directories within it.

What chroot lets you do is transform a subdirectory into the root filesystem just for one particular process. You build a skeletal Unix filesystem containing only whatever files are necessary for that process to run, then imprison that process – and any subprocesses it might create – within it, so that it can no longer see the whole directory tree, just its particular subtree.

In essence, then, it virtualises just the filesystem: it doesn't protect the system against a program with superuser – ie, administrative – rights, but it is very handy for testing purposes.
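For readers who want to see the mechanism, here is a minimal Python sketch for a Unix system. `run_in_chroot` uses the standard library's `os.chroot`, must run as root, and assumes the jail directory already holds the skeletal filesystem described above. `jailed_host_path` is only a simplified model of how paths resolve inside the jail (it ignores symlinks and mounts), included so the effect can be seen without root. Both function names are hypothetical.

```python
import os
import posixpath

def jailed_host_path(jail_root: str, path: str) -> str:
    """Model of chroot path resolution: map the path a jailed process asks
    for to the real host path. '..' cannot climb above the jail, just as
    '..' at a chroot's '/' refers back to '/' itself. Symlinks are ignored."""
    inside = posixpath.normpath(posixpath.join("/", path))  # '/../etc' -> '/etc'
    return posixpath.join(jail_root, inside.lstrip("/"))

def run_in_chroot(jail_root: str, argv: list[str]) -> int:
    """Run argv with jail_root as its root directory (root privileges needed).
    argv[0] and any libraries it needs must exist inside the jail."""
    pid = os.fork()
    if pid == 0:  # child process
        try:
            os.chroot(jail_root)  # from now on this process sees jail_root as '/'
            os.chdir("/")         # don't leave the working directory outside
            os.execv(argv[0], argv)
        finally:
            os._exit(127)         # only reached if chroot or exec failed
    _, status = os.waitpid(pid, 0)
    return os.waitstatus_to_exitcode(status)

print(jailed_host_path("/srv/jail", "/../../etc/passwd"))  # /srv/jail/etc/passwd
```

Subprocesses started by the jailed program inherit the same root, which is what keeps the whole process subtree penned in.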

The chroot command proved to be such a useful tool that in time it was extended into a more complete form, which also virtualised the memory space, I/O and so on of the operating system. The result is that a process locked inside the virtual environment seems to have the whole computer to itself.

To understand how this works, consider how modern operating systems work. In most processors, there are at least two privilege levels at which code can execute, which are generally called something like kernel space and user space.

Code running in kernel space – usually the OS kernel and any essential device drivers – is in direct control of the hardware and can directly manipulate peripherals and so on. In contrast, code running in user space can’t – it just gets given its own block of memory, which is all it’s allowed to access, and it has to ask the kernel nicely for I/O. On x86 chips, kernel code runs at a level called Ring 0 and user code in Ring 3, and the levels in between are left unused.

Splitting up

There's only one kernel, and generally, in most systems, there's only one big program running in user space. Sometimes it's called "userland", and it encompasses all the bits that you actually interact with.

As far as the kernel is concerned, userland can effectively be considered as one big program. One original parent process – on Unix boxes, traditionally called init – starts up all the rest and thus is the parent of the whole tree of dozens to hundreds of others.

So if you set things up so that the kernel is able to run more than one userland at a time, you can effectively virtualise the whole visible face of the OS. So long as the primary copy stays in control of the filesystem and the secondary copies are penned up in subdirectories, you can suddenly split your computer into multiple identical “virtual environments” (VEs).

There’s only one copy of the actual OS installed and only one kernel running, but you can have lots of separate root directories and install whatever you like in each of them without it affecting the others.

Each one starts with a skeletal copy of the filesystem with just the essential files it needs – which is what you do with the chroot command anyway – and then it can put whatever it likes, wherever it likes, and it all stays neatly penned up and separate from all the other software on the computer.

This is called “operating-system level virtualisation” or “kernel-level isolation.”

Every sysadmin's dream

No more “DLL hell,” no more clashing system requirements, no more trying to untangle which directories or files belong to which app. Apps are completely isolated, simplifying management – for instance, they can be removed without a trace, as every file the app ever wrote to disk is locked inside its VE.

So far, so good. Sounds like running a few Windows VMs on a server, doesn’t it?

But it isn’t. Because at the same time, there’s only a single install of a single OS to configure, patch and update; one set of device drivers; and rather importantly if you’re running a commercial OS, one licence to pay for.

To anyone who’s ever been a sysadmin, it’s a dream come true. Every app on the machine is locked away from every other one in its own little walled garden. It’s also very different from a performance or administration point of view – each VE is equivalent to just a program, rather than a whole OS instance. You don’t need to allocate storage to VEs – they all share the same pool of memory and disk space, managed by the kernel, so it is vastly more efficient to run multiple VEs than full VMs.

A dozen copies of a full OS under a hypervisor, plus the host OS, means thirteen times the hardware resources needed by one – so suddenly you need a dual-socket eight-core server with 32GB of RAM to make it all work. Not so with VEs – it only needs the resources for one OS plus the dozen apps.
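The arithmetic behind that comparison can be spelled out. This Python sketch assumes hypothetical figures of 2GB of RAM per OS instance and 0.5GB per application: under a hypervisor each of the n apps gets its own guest OS and the host OS counts too, whereas with VEs there is only one OS.

```python
def ram_for_vms(n_apps: int, os_gb: float, app_gb: float) -> float:
    """One guest OS per app under a hypervisor, plus the host OS itself."""
    return (n_apps + 1) * os_gb + n_apps * app_gb

def ram_for_ves(n_apps: int, os_gb: float, app_gb: float) -> float:
    """VEs share a single OS instance and kernel; only the apps multiply."""
    return os_gb + n_apps * app_gb

# Hypothetical figures: 2GB per OS instance, 0.5GB per application.
print(ram_for_vms(12, 2.0, 0.5))  # 32.0
print(ram_for_ves(12, 2.0, 0.5))  # 8.0
```

With these assumed numbers the dozen VMs land on the 32GB server mentioned above, while the same dozen apps in VEs fit in a quarter of that.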

It must be admitted that this approach doesn’t work for every type of program. If an application has to modify the kernel or change its behaviour, or needs kernel privileges, then you can’t run it inside a virtual environment, because that would affect all of them – so certain apps need to run in the base or parent environment. You can’t just install absolutely anything alongside anything else. Some don’t get along and won’t share.

But overall, VEs are a very useful and powerful tool. The snag is that at the moment, you only get this if you’re running a version of Unix with long trousers.

Solaris 10 calls VEs Zones, with resource management provided by Containers, and it’s a very powerful implementation. For instance, each zone can have its own network interfaces and sets of user IDs.

Zones can be bound to particular processors for performance optimisation, but they don’t need a dedicated one, nor must they be allocated any memory. The OS not only supports zones offering the native Solaris API but also ones emulating older versions of Solaris as well as Linux-branded zones.

AIX 6.1 does it, too; IBM call them “workload partitions” – WPARs for short – as opposed to LPARs, IBM’s name for full-system virtualisation, as we described in the previous article. WPARs offer several levels of isolation, from some shared resources to none, right down to a single process. A running WPAR can even be migrated onto a different host server.

And for the Free Software user, FreeBSD offers "jails". Jails don’t have all the bells and whistles of their commercial rivals – you just get multiple instances of the same version of the same OS – but are still a very useful tool. Most of the other BSDs shared a similar implementation under the name "sysjails", but a security flaw in that implementation caused development to stop in 2009.

With Linux, the situation is more complex. VEs are not a standard part of the Linux kernel, but there are multiple competing tools offering variations on the same functionality. The newest and possibly simplest is LXC (“Linux Containers”), which builds on the cgroups functionality that’s been built into the kernel since 2.6.29. Rather more mature is Linux-VServer, which is sufficiently robust to allow other distributions’ userlands to be started inside a VE.

Parallel worlds

Probably the most capable for now is OpenVZ, which allows VEs to have their own network and I/O devices. OpenVZ is the basis of Parallels’ commercial Virtuozzo Containers product and its development is sponsored by Parallels.

Aimed at service providers, Virtuozzo builds on OpenVZ with additional management and provisioning tools. It can support a higher density of containers with closer management of their resources and it integrates with Parallels’ Plesk management tools.

Interestingly, Virtuozzo also runs on Windows. Parallels is not as well-known in PC virtualisation circles as it is on Linux and on the Mac, where its Parallels Desktop product brought several new features to Mac users wishing to run Windows. Virtuozzo Containers for Windows brings Unix-style partial virtualisation to Microsoft’s platform.

Each container takes only about 60MB of files, but appears from the management console to be a complete, independent machine – you can even assign it its own IP address and connect to it with Remote Desktop. Obviously, all the containers on a host run the same OS as the host itself, but the memory and disk footprint is dramatically reduced as you're only running a single OS instance.
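To make the footprint argument concrete, here is a back-of-the-envelope sketch. The ~60MB per-container figure comes from the paragraph above. The 10,000MB size of a full guest OS install is a hypothetical round number, and host or hypervisor overhead is ignored, so treat the outputs as illustrative only.

```python
# Back-of-the-envelope disk footprint: n full VMs vs n OS-level containers.
# ASSUMPTION: FULL_OS_MB is a hypothetical round number for one full guest OS
# install; CONTAINER_MB uses the ~60 MB per-container figure quoted in the text.
# Host/hypervisor overhead is ignored in both cases.

FULL_OS_MB = 10_000   # hypothetical size of one complete OS install
CONTAINER_MB = 60     # approximate per-container footprint quoted in the text


def full_vm_footprint(n: int) -> int:
    """Each full VM carries its own complete copy of the OS."""
    return n * FULL_OS_MB


def container_footprint(n: int) -> int:
    """Containers share one OS install and add only a small per-container delta."""
    return FULL_OS_MB + n * CONTAINER_MB


if __name__ == "__main__":
    for n in (1, 10, 50):
        print(f"{n:>3} instances: VMs {full_vm_footprint(n):>7} MB, "
              f"containers {container_footprint(n):>6} MB")
```

With a single instance the container approach costs slightly more (the shared OS plus one container), but by ten instances it is roughly a tenth of the full-VM figure under these assumptions.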

Virtuozzo or something like it might yet cause a small revolution in PC virtualisation. If it does, then going by Microsoft's track record, the technique is likely to be imitated by Microsoft itself. That is partly because it is what Microsoft is already doing with Hyper-V, which is progressively acquiring more and more of the features of VMware's vSphere and vCenter management tools, but mostly because Microsoft is in the best position to incorporate OS-level virtualisation into its own OS.

To be fair, the notion of OS-level virtualisation is not a Parallels innovation – as we've discussed, it's been around for more than a decade and has been implemented in multiple OSs. Parallels is just the first company to make it happen on Windows. If the concept were to catch on in the Windows world, it would make virtualisation a great deal simpler, faster and more efficient.

In the next part of this series, we will look at the state of the virtualisation market today, and in the final one, where it might go next.

For the historically inclined: one of the only PC OSs ever to actually use more than rings 0 and 3 was IBM's OS/2. Its kernel ran in ring 0 and ordinary unprivileged code in ring 3, as usual, but unprivileged code that did I/O ran in ring 2.

This is why OS/2 won't run under Oracle's open-source hypervisor VirtualBox in its software-virtualisation mode, which forces ring 0 code in the guest OS to run in ring 1. ®

Source: http://www.theregister.co.uk/2011/07/18/brief_history_of_virtualisation_part_3/page2.html

Magic Quadrant for x86 Server Virtualization Infrastructure 2011

VMware is under pressure for sure.

http://www.citrix.com/site/resources/dynamic/additional/citirix_magic_quadrant_2011.pdf

or

http://www.gartner.com/technology/media-products/reprints/microsoft/vol2/article8a/article8a.html

As of mid-2011, at least 40% of x86 architecture workloads have been virtualized on servers; furthermore, the installed base is expected to grow five-fold from 2010 through 2015 (as both the number of workloads in the marketplace grows and penetration grows to more than 75%). A rapidly growing number of midmarket enterprises are virtualizing for the first time, and have several strong alternatives from which to choose. Virtual machine (VM) and operating system (OS) software container technologies are being used as the foundational elements for infrastructure-as-a-service (IaaS) cloud computing offerings and for private cloud deployments. x86 server virtualization infrastructure is not a commodity market. While migration from one technology to another is certainly possible, the earlier that choice is made, the better, in terms of cost, skills and processes. Although virtualization can offer an immediate and tactical return on investment (ROI), virtualization is an extremely strategic foundation for infrastructure modernization, improving the speed and quality of IT services, and migrating to hybrid and public cloud computing.
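The five-fold growth projection can be turned into an implied annual rate. This is a simple calculation on the quoted figure, assuming smooth compound growth over five yearly periods from 2010 to 2015; it is not a number Gartner states.

```python
def implied_cagr(growth_multiple: float, years: int) -> float:
    """Compound annual growth rate that turns 1 unit into `growth_multiple` units in `years` years."""
    return growth_multiple ** (1 / years) - 1


# Five-fold growth of the installed base from 2010 through 2015, as quoted in the text.
rate = implied_cagr(5, 2015 - 2010)
print(f"Implied annual growth: {rate:.1%}")  # prints "Implied annual growth: 38.0%"
```

In other words, five-fold growth over five years works out to an installed base growing by roughly 38% a year.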

Magic Quadrant for x86 Server Virtualization Infrastructure 2010

http://www.vmware.com/files/pdf/cloud/Gartner-VMware-Magic-Quadrant.pdf

Compare 2011 vs. 2010.

The cloud is like MMA; VMware, Citrix in main event

By Rodney Rogers

I’m an MMA fan. The sport of mixed martial arts combines the multiple disciplines of wrestling, boxing, and jiu jitsu into one combat sport fought in an eight-sided cage called the octagon. These athletes are modern-day gladiators. Most come from a core background in one discipline (a collegiate wrestler, for example) and then have to develop secondary skills – boxing and jiu jitsu in this case – to really become professionally competitive in the sport.

I am also an entrepreneur, technologist and CEO. For me, announcements this past Tuesday in the cloud world are a metaphor for two highly trained MMA fighters stepping into the ring after a two-year training camp spent developing their secondary disciplines in preparation to battle for Cloud Service Provider (CSP) supremacy.

I am, of course, talking about Citrix’s acquisition of Cloud.com and VMware’s announcement of vSphere 5 as the foundation of its move toward a comprehensive cloud OS. To me, both announcements foreshadow three major shifts that will occur in the coming years in the cloud computing and CSP space:

1. A cloud OS will reign. The versions of Windows, Linux, Solaris, AIX, HP-UX, etc., as we know them today will be rendered useless by the science of cloud computing. This will take some time, and perhaps elements of this layer will be repurposed, but both the hypervisor and the application will incrementally subsume functionality historically performed by the OS. We will see more intelligently pre-configured virtual machines (VMs) running workloads for increasingly intelligent applications pre-configured for a particular hypervisor.

We are already starting to see this with JEOS (Just Enough Operating System) VMs that intelligently adjust for attributes such as memory allocation through their interaction with the hypervisor. Dynamic resource allocation to VMs and the ability of the applications to drive such requests will represent the infrastructure of the future. This will be a core shift in the cloud computing space and will drive the orientation of the foundational levels of the cloud stack, Infrastructure as a Service and Platform as a Service.
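The idea of VMs asking the hypervisor for resources at run time can be sketched as a shared memory pool. This is a toy model under stated assumptions: `HypotheticalHypervisor`, `request` and `release` are invented names, not any vendor's API, and real hypervisors adjust guest memory through mechanisms this sketch does not model.

```python
from dataclasses import dataclass, field


@dataclass
class HypotheticalHypervisor:
    """Toy model: a host memory pool that VMs draw from and return to at run time.

    All names here are illustrative assumptions, not any vendor's API.
    """
    total_mb: int
    allocations: dict[str, int] = field(default_factory=dict)

    @property
    def free_mb(self) -> int:
        """Memory not currently granted to any VM."""
        return self.total_mb - sum(self.allocations.values())

    def request(self, vm: str, mb: int) -> int:
        """Grant up to `mb` more memory to `vm`; returns the amount actually granted."""
        granted = min(mb, self.free_mb)
        self.allocations[vm] = self.allocations.get(vm, 0) + granted
        return granted

    def release(self, vm: str, mb: int) -> int:
        """Return up to `mb` of `vm`'s memory to the pool; returns the amount freed."""
        freed = min(mb, self.allocations.get(vm, 0))
        self.allocations[vm] = self.allocations.get(vm, 0) - freed
        return freed


if __name__ == "__main__":
    hv = HypotheticalHypervisor(total_mb=8192)
    print(hv.request("web", 4096))  # full grant
    print(hv.request("db", 6144))   # only what is left in the pool
    hv.release("web", 2048)         # "web" shrinks when its load drops...
    print(hv.request("db", 2048))   # ...and "db" can grow into the freed memory
```

The point of the sketch is the direction of control the author describes: the workload asks, and the hypervisor grants from, or reclaims back into, a shared pool.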

This is like having a core base skill of wrestling for an MMA fighter: all other skill sets leverage the base. A case could be made that VMware is the better wrestler today, given its heritage of innovation at the proprietary hypervisor and hypervisor service-provisioning level. On the other hand, it could be argued that Citrix's acquisition of Cloud.com gives it the better wrestling skills. Cloud.com has enabling IaaS software IP that will undoubtedly provide the base for Citrix's efforts to build out its own open source-based cloud OS (perhaps augmenting or even usurping its own Project Olympus efforts).

Too close to call at this point. They have two different styles of wrestling.

2. The hybrid cloud will become the most important cloud. Most of the dialogue today is about which cloud is best for a particular enterprise when considering the choice of commodity clouds (Internet-facing web-scaling clouds such as Amazon Web Services), public clouds (multi-tenant clouds that can either be Internet- or private-access-based) or private clouds (essentially, dedicated single-tenant virtualized technology).

Key considerations are the obvious ones: security, performance, elasticity, etc. Greenfield applications generally have entirely different requirements than legacy back-office application migration (a space more commonly being referred to now as the enterprise cloud). It goes on and on.

The space is entirely over-marketed in a self-serving manner toward the strengths or away from the weaknesses of the CSPs doing the marketing. All of that will get sorted out in the next 18 to 24 months. Simply put, the best technologies for the required use case will win. If not, shame on the buyer.

As a CSP, the real juice in forward markets will be in defining and controlling the hybrid cloud. By hybrid cloud, I mean the heterogeneous solution of on-premise private clouds interacting with off-premise public clouds. The better the tools are to build on-premise private clouds, the more bursting will occur into off-premise public clouds. It will be a virtuous cycle.
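The bursting described here amounts to a placement policy: fill on-premise private capacity first and send the overflow off-premise. A minimal sketch, assuming each workload needs a single abstract number of capacity units and workloads are placed in arrival order; `place_workloads` is a hypothetical helper, and a real hybrid-cloud scheduler would likely also weigh cost, latency and where the data lives.

```python
def place_workloads(
    demands: list[tuple[str, int]], private_capacity: int
) -> tuple[list[str], list[str]]:
    """Greedy first-fit placement with cloud bursting.

    Each workload goes to the on-premise private cloud if it still fits;
    otherwise it "bursts" to an off-premise public cloud.
    Returns (private, public) lists of workload names.
    """
    private: list[str] = []
    public: list[str] = []
    used = 0
    for name, units in demands:
        if used + units <= private_capacity:
            private.append(name)  # fits on-premise
            used += units
        else:
            public.append(name)   # overflow bursts off-premise
    return private, public


print(place_workloads([("a", 40), ("b", 30), ("c", 50), ("d", 20)], private_capacity=100))
# prints (['a', 'b', 'd'], ['c'])
```

Note that the smaller workload "d" still fits on-premise after "c" has burst; a first-fit policy keeps using private capacity wherever it can.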

Today, there are very few companies that functionally make money on both ends of that spectrum. I look at both the Citrix and VMware announcements as moves toward filling this future hybrid cloud opportunity. Citrix moves toward this in its acquisition of Cloud.com, whose software assets have been used to build such scaled private clouds as Zynga’s Z Cloud. VMware moves toward this simply by realizing it is no longer good enough to own the private cloud hypervisor market. It is now looking to develop true cloud-management products.

They both have pieces of the off-premise puzzle (and plenty of capital to develop it further) but neither is there yet. This is where this race will be. This area will require both skill and endurance and a tough chin, similar to what the art of boxing means to the MMA fighter. Given VMware’s massive share of the enterprise hypervisor market, it gets the nod as the better boxer at this point, but it’ll have to be wary of Citrix’s open source agility.

3. CSPs will need it all. It’s obvious that having a full cloud stack will matter, but it will need to be integrated in a manner that does not exist today and will broaden its definition. Having an enterprise-grade hypervisor in this fight is sort of like breathing: It will keep you alive, but won’t get your arm raised in victory.

Both VMware and Citrix have battle-tested hypervisors in ESXi/vSphere and XenServer, respectively. Both have data center assets and now IaaS and cloud OS capabilities. Both have management tools. Both are in their early stages with their PaaS offerings, with VMware’s recent launch of Cloud Foundry (interestingly, an open source PaaS) and Citrix’s OpenCloud, which leverages OpenStack.

Both also have a software portfolio, but this is where I think Citrix separates itself in a number of areas. One example is network/WAN optimization for on-premise to off-premise connectivity. Citrix's mature HDX technologies, in conjunction with XenDesktop, have focused on solving the "last mile" of on-premise user experience with off-premise workload processing.

But all of these components must be interconnected. This is about a breadth of skill sets that work together in a “cloud spectrum” of sorts. This is similar to the breadth of specific technical skills that the MMA fighter must master in the art of jiu jitsu. At this stage, I give the nod to Citrix for having the better jiu jitsu.

VMware and Citrix are premium fighters

However, there are many other contenders in the division. The list is long. Don’t count out AWS with its impressively consistent feature release cycles. Rackspace has approximately $1 billion of dedicated hosting and managed services clients to direct to the virtues of OpenStack. Microsoft, IBM and others have well-known component solutions but have demonstrated a lack of agility, among other things, to enable the true spectrum. There are contenders in Red Hat, Joyent, Virtustream and many others.

There will be no shortage of opportunities for very smart component technologies. The major players will either have to develop these things or acquire them. Boxers must learn to grapple, kick, and employ submission techniques. Wrestlers must learn to strike effectively and attack from their guard. One-dimensional CSPs need to become effective three-dimensional CSPs in order to win a belt.

VMware and Citrix just look like the top contenders right now. Yesterday was the weigh-in for their title fight. But expect more days like last Tuesday.

Rodney Rogers is co-founder and CEO of Virtustream.

Image courtesy of Flickr user fightlaunch.

Cloud and Performance… Myths and Reality

by David Linthicum@Microsoft

First the myths:

Myth One: Cloud computing depends on the Internet. The Internet is slower than our internal networks. Thus, systems based on IaaS, PaaS, or SaaS clouds can’t perform as well as locally hosted systems.

Myth Two: Cloud computing forces you to share servers with other cloud computing users. Thus, in sharing hardware platforms, cloud computing can’t perform as well as locally hosted systems.

Let’s take them one at a time.

First, the Internet thing. Poorly designed applications that run on cloud platforms are still poorly designed applications, no matter where they run. Thus, if you design "chatty" applications that communicate constantly between client and server, latency, and therefore performance, will be an issue, cloud or not.
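The cost of a chatty design is mostly arithmetic: every extra client-server round trip pays the network latency again. A rough model with hypothetical numbers (1 ms LAN and 50 ms Internet round-trip times, 2 ms of server work per item) shows why design can matter more than location.

```python
def total_latency_ms(round_trips: int, rtt_ms: float, items: int, work_ms_per_item: float) -> float:
    """Rough wall-clock estimate: network round trips plus server-side work."""
    return round_trips * rtt_ms + items * work_ms_per_item


ITEMS = 200
RTT_LAN_MS = 1    # hypothetical round trip on an internal network
RTT_WAN_MS = 50   # hypothetical round trip over the Internet to a cloud
WORK_MS = 2       # hypothetical server time per item

chatty_lan = total_latency_ms(ITEMS, RTT_LAN_MS, ITEMS, WORK_MS)  # one call per item, local
chatty_wan = total_latency_ms(ITEMS, RTT_WAN_MS, ITEMS, WORK_MS)  # one call per item, cloud
batched_wan = total_latency_ms(1, RTT_WAN_MS, ITEMS, WORK_MS)     # one call for everything, cloud

print(chatty_lan, chatty_wan, batched_wan)  # prints 600 10400 450
```

Under these assumptions the batched design over the Internet (450 ms) beats the chatty design on the local network (600 ms), while the chatty design over the Internet is more than twenty times slower.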

If they are designed correctly, cloud computing applications that leverage the elastic scaling of cloud-based systems actually provide better performance than locally hosted systems. This means decoupling the back-end processing from the client, which allows the back-end processing to occur with minimal communications with the client. You’re able to leverage the scalability and performance of hundreds or thousands of servers that you allocate when needed, and de-allocate when the need is over.
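The decoupled design can be sketched as a back end that accepts one whole job and fans it out across workers. This is a minimal sketch under stated assumptions: `process_item` and `run_job` are hypothetical, threads stand in for cloud servers, and because of Python's global interpreter lock the threads show the shape of the design rather than a real CPU speedup.

```python
from concurrent.futures import ThreadPoolExecutor


def process_item(x: int) -> int:
    """Stand-in for real back-end work on one item (hypothetical)."""
    return x * x


def run_job(items: list[int], workers: int) -> list[int]:
    """Back end: fan one submitted job out across `workers`, then gather the results.

    The client sends the whole job in a single request instead of one request
    per item. Threads stand in for servers allocated for this job; leaving the
    `with` block shuts the pool down, standing in for de-allocation.
    """
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(process_item, items))  # map preserves input order


if __name__ == "__main__":
    print(run_job(list(range(10)), workers=4))
```

Across real servers the same pattern would typically sit behind a job queue, with the client submitting once and collecting the result when the back end is done.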

Most new applications that leverage a cloud platform also understand how to leverage this type of architecture to take advantage of the cloud-delivered resources. Typically they perform much better than applications that exist within the local data center. Moreover, the architecture ports nicely when it’s moved from public to private or hybrid clouds, and many of the same performance benefits remain.

Second, the sharing thing. This myth comes from the latency issues found in older hosting models, in which we all shared a single CPU that supported thousands of users, so the more users on the CPU, the slower things got. Cloud computing is much different. It's no longer the 1980s, when I was a mainframe programmer watching the clock at the bottom of my 3270 terminal as my programs took hours to compile.

Of course cloud providers take different approaches to scaling and tenant management. Most leverage virtualized and highly distributed servers and systems that are able to provide you with as many physical servers as you need to carry out the task you’ve asked the cloud provider to carry out.

You do indeed leverage a multitenant environment, and other cloud users work within the same logical cloud. However, you all leverage different physical resources for the most part, and thus the other tenants typically don’t affect performance. In fact, most cloud providers should provide you with much greater performance considering the elastic nature of clouds, with all-you-can-eat servers available and ready to do your bidding.

Of course there are tradeoffs when you leverage different platforms, and cloud computing is no different. It does many things well, such as elastic scaling, but there are always those use cases where applications and data are better off on traditional platforms. You have to take things on a case-by-case basis, but as time progresses, cloud computing platforms are eliminating many of the platform tradeoffs.

Applications that make the best use of cloud resources are those designed specifically for cloud computing platforms, as I described above. If the application is aware of the cloud resources available, the resulting application can be much more powerful than most that exist today. That’s the value and potential of cloud computing.

Most frustrating to me is that, apart from explaining the differences between simple virtualization and cloud computing, I've become the myth buster around cloud computing performance. In many respects, the myths about performance issues are a bit of FUD created by internal IT around the use of cloud computing, which many in IT view as a threat these days. Fortunately, the cloud is getting much better press as organizations discover proper fits for cloud computing platforms and choose the path to the cloud for both value and performance.

By the way, this is my last article in this series. I enjoyed this opportunity to speak my mind about the emerging cloud computing space, and I kept to my objectives of being candid, thinking independently, and providing an education.

I'm a full-time cloud computing consultant by trade, working in a company I formed several years ago called Blue Mountain Labs. I formed Blue Mountain Labs to guide enterprises through the maze of issues on the way to cloud computing, as well as to build private, public, and hybrid clouds for enterprises and software companies. You can also find me in the pages of InfoWorld, where I'm the cloud blogger, and I do my own podcast called the Cloud Computing Podcast.

Again, I want to thank everyone. Good luck in the cloud.

Citrix eyes 50 times faster services

NEW DELHI: Two days after Citrix Systems announced its acquisition of Cloud.com, analysts feel the move may make the company one of the leading providers of cloud computing infrastructure in India. Citrix is already considered to have major expertise in providing virtualization services, and experts feel that after buying Cloud.com it will be able to offer a solution to companies looking to move to cloud computing.

Cloud computing is the latest buzzword in the IT industry, as it is expected to "free" people from their desktop computers and let them access data services anytime, anywhere with the help of an internet connection. "As I see it, currently chief information officers, the people who look after IT departments in their companies, are most pre-occupied with virtualization. Cloud computing is third priority on their list," said Biswajeet Mahapatra, research director for Gartner and an expert on enterprise IT.

Citrix Systems on July 12 announced it had completed the acquisition of Cloud.com, a market-leading provider of software infrastructure platforms for cloud providers. Citing a research report by IDC, the company said the transition from the PC era to the cloud era is expected to fuel a massive build-out in cloud infrastructure, creating a market that may exceed $11 billion by 2014.

"With the addition of Cloud.com, Citrix will be able to offer services up to 50 times faster, at one fifth the cost of alternative solutions," said Ravi Gururaj, V-P of engineering at Citrix.

Citrix Buys Cloud.com for More Than $200 Million; Redpoint Is on a Roll

TechCrunch has learned that Citrix Systems is buying Cloud.com for between $200 million and $250 million. The deal should be announced within the hour. Cloud.com gives companies their own private EC2-like infrastructure. The team has built the company in just a few years, boasting massive clients with demanding infrastructure needs like Zynga, Tata, and other huge undisclosed tech names. Cloud.com was funded by Redpoint Ventures, Nexus Capital and Index Ventures.

Of course, the big question is: with things going so well, why would Cloud.com sell? In a Valley where companies either take forever to build into huge businesses or wind up as a quick flip worth less than $100 million, deals this size have become rare. And closing one just two years after its first venture round, having raised just $20 million in funding, is even rarer. Perhaps the offer was just too life-changing for the entrepreneurs to pass up. Who are we to judge that?

This is another exit for Redpoint Ventures, which is having quite a year. Clearwell was bought by Symantec for $390 million. Qihoo went public and now boasts a $2.5 billion market capitalization. HomeAway went public too; it's now worth $3.3 billion, and Redpoint owns 26% of it. Responsys also went public and is valued at $760 million. Redpoint was the first money in Cloud.com, so although the purchase price isn't as big, it's still a nice multiple.